🇰🇷 Korea Edition: Building a KRX Stock List and Daily-Bar Downloader with pykrx (Resume, Validation, and Blacklist Included)

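This tutorial covers the daily-bar (daily candlestick) data-collection module for the Korean stock market. It targets common stocks on KOSPI and KOSDAQ, the two main boards of KRX (Korea Exchange). It keeps the Japan edition's "everything in a single cell" design and adds pykrx-based list retrieval, a multithreaded prefilter, per-ticker downloads, and a resume mechanism.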

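📦 What pykrx is used for and how the stock list is built

The script uses the Python package pykrx to fetch the current KRX stock list. It installs the package automatically if needed and then:

- Fetches KOSPI / KOSDAQ common-stock codes and company names

- Zero-pads codes to six digits (e.g. 005930)

- Tags each stock's board: KS for KOSPI, KQ for KOSDAQ

- Stores each entry as (code, name, board), e.g. ("035420", "NAVER", "KS")

- Saves the list to kr_list_all.csv so later (resumed) runs can reuse it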
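Because kr_list_all.csv is read back on later runs, the six-digit codes have to survive the CSV round trip. By default, pandas parses a column such as 005930 as an integer and drops the leading zeros. The sketch below assumes the same (code, name, board) column layout. `save_list` and `load_list` are hypothetical helper names, not pykrx functions.

```python
import os
import tempfile

import pandas as pd

COLUMNS = ["code", "name", "board"]


def save_list(rows, path):
    """Write (code, name, board) tuples, zero-padding codes to six digits."""
    df = pd.DataFrame(rows, columns=COLUMNS)
    df["code"] = df["code"].astype(str).str.zfill(6)
    df.to_csv(path, index=False)


def load_list(path):
    """Read the list back, forcing `code` to str so leading zeros are kept."""
    df = pd.read_csv(path, dtype={"code": str})
    return list(zip(df["code"], df["name"], df["board"]))


# Round-trip demo in a temporary directory
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "kr_list_all.csv")
    save_list([(5930, "Samsung Electronics", "KS"), ("35420", "NAVER", "KS")], path)
    restored = load_list(path)
    naive = pd.read_csv(path)["code"].tolist()  # default parsing, for comparison

print(restored)  # [('005930', 'Samsung Electronics', 'KS'), ('035420', 'NAVER', 'KS')]
print(naive)     # [5930, 35420]
```

The same `dtype={"code": str}` argument matters anywhere a saved list, prefilter result, or manifest is read back.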

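If list retrieval fails, the script falls back to a minimal built-in list (Samsung Electronics and NAVER) so the rest of the pipeline can still run.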

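🧾 KOSPI / KOSDAQ code format and classification

KRX stock codes have six digits. On Yahoo Finance, the board is encoded as a suffix:

- .KS: KOSPI (e.g. 005930.KS, Samsung Electronics)

- .KQ: KOSDAQ

The script names each stock's output file after this symbol, e.g. 005930.KS.csv or 035420.KS.csv. KOSDAQ stocks are saved as `<code>.KQ.csv`.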
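The mapping from code to symbol, and from filename back to code, fits in two small functions. In the sketch below, `to_yahoo_symbol` follows the script's `map_symbol_kr` but adds a board check that the script does not have. `parse_filename` is a hypothetical inverse for recognising files that are already on disk. Both names are illustrative.

```python
import re

BOARDS = {"KS": "KOSPI", "KQ": "KOSDAQ"}
# <six digits>.<KS|KQ>.csv, e.g. 005930.KS.csv
_FILE_RE = re.compile(r"^(\d{6})\.(KS|KQ)\.csv$")


def to_yahoo_symbol(code, board="KS"):
    """Zero-pad the KRX code and append the Yahoo board suffix."""
    code = str(code).zfill(6)
    board = str(board).upper()
    if board not in BOARDS:
        raise ValueError(f"unknown board: {board}")
    return f"{code}.{board}"


def parse_filename(filename):
    """Return (code, board) for a per-stock CSV name, or None for other files."""
    m = _FILE_RE.match(filename)
    return (m.group(1), m.group(2)) if m else None


print(to_yahoo_symbol("5930"))            # 005930.KS
print(parse_filename("000660.KS.csv"))    # ('000660', 'KS')
print(parse_filename("kr_manifest.csv"))  # None
```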

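🔍 Prefilter logic and the blacklist file (kr_symbol_blacklist.csv)

To avoid wasting requests on symbols for which Yahoo Finance has no data, the script first runs a quick check on every code:

- Try to fetch the last 5 days of daily bars

- If that fails, try 1 year of monthly bars (1y/1mo), then 5 years of quarterly bars (5y/3mo)

- Run the checks on a thread pool to speed things up (THREADS workers, 4 by default)

Symbols that pass are saved to kr_prefilter_ok.csv, and symbols that fail are saved to kr_symbol_blacklist.csv. Setting FORCE_REFILTER = False makes the next run reuse kr_prefilter_ok.csv, which avoids repeating the checks. The blacklist file is a record of what was dropped.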
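The split into an OK list and a blacklist can be sketched without touching Yahoo Finance by passing the check in as a function. In the script, that function is `quick_symbol_ok`. Here, `prefilter` and `fake_check` are hypothetical names, and `fake_check` is an offline stand-in so the example runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def prefilter(rows, check, max_workers=4):
    """Split (code, name, board) rows into (ok, blacklist) using check(row) -> bool.
    A check that raises counts as a failure. Rows are assumed to be unique."""
    ok, bad = [], []
    with ThreadPoolExecutor(max_workers=max_workers) as ex:
        futs = {ex.submit(check, row): row for row in rows}
        for fut in as_completed(futs):
            row = futs[fut]
            try:
                passed = bool(fut.result())
            except Exception:
                passed = False
            (ok if passed else bad).append(row)
    # as_completed yields in completion order; restore input order for stable CSV output
    order = {row: i for i, row in enumerate(rows)}
    ok.sort(key=order.__getitem__)
    bad.sort(key=order.__getitem__)
    return ok, bad


def fake_check(row):
    """Offline stand-in for a network check: pretend codes ending in '0' have data."""
    if row[0] == "999999":
        raise RuntimeError("simulated network error")
    return row[0].endswith("0")


rows = [("005930", "Samsung Electronics", "KS"),
        ("000661", "Dummy A", "KQ"),
        ("999999", "Dummy B", "KQ")]
ok, blacklist = prefilter(rows, fake_check)
print(ok)         # [('005930', 'Samsung Electronics', 'KS')]
print(blacklist)  # [('000661', 'Dummy A', 'KQ'), ('999999', 'Dummy B', 'KQ')]
```

Treating an exception as a failure mirrors the script, where any error inside the check sends the symbol to the blacklist.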

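🧨 Per-ticker download strategy and throttling risk

Unlike the US and Hong Kong modules, the Korea module takes a conservative approach:

- No multi-ticker batch downloads: every stock is requested on its own (the requests still run concurrently on THREADS worker threads)

- Each stock gets its own yfinance.Ticker().history(...) call

- Period-based requests are tried first, from period="max" down through "10y", "5y", "2y", and "1y"

- If none of them returns data, an explicit start/end range is tried as a fallback

- Every result updates that stock's status in the manifest (done or failed)

Combined with randomized back-off between attempts, which is longer when an error looks like throttling, this reduces the risk of being throttled by Yahoo Finance (HTTP 429 Too Many Requests).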
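The order of attempts in `safe_history` can be isolated from yfinance for testing. The sketch below assumes the download function and the sleep function are injected. `fetch_with_fallback` and `fake_fetch` are hypothetical names. In the script, the real call is `yf.Ticker(symbol).history(period=..., ...)`, followed by the `start`/`end` variant. The throttling test uses the same substring match on the error message as the script. It is a heuristic, not an official Yahoo Finance error code.

```python
import random
import time

PERIODS = ["max", "10y", "5y", "2y", "1y"]


def fetch_with_fallback(fetch, periods=PERIODS, start="2000-01-01", end="2099-12-31",
                        sleep=time.sleep):
    """Try fetch(period=p) for each period, then fetch(start=..., end=...).
    `fetch` returns a list of rows (empty means no data) or raises.
    Returns (rows, how) on success, (None, None) otherwise."""
    for i, p in enumerate(periods):
        try:
            rows = fetch(period=p)
            if rows:
                return rows, f"period={p}"
            sleep(0.5 + 0.2 * i + random.uniform(0, 0.5))
        except Exception as e:
            throttled = any(k in str(e).lower() for k in ("too many requests", "429", "rate"))
            # back off longer when the error message looks like throttling
            if throttled:
                sleep(3.0 + i + random.uniform(0, 2.0))
            else:
                sleep(0.5 + 0.2 * i + random.uniform(0, 0.5))
    try:
        rows = fetch(start=start, end=end)
        if rows:
            return rows, "start/end"
    except Exception:
        pass
    return None, None


calls, waits = [], []


def fake_fetch(period=None, start=None, end=None):
    """Offline stand-in: throttled on 'max', empty on '10y', data on '5y'."""
    calls.append(period or "start/end")
    if period == "max":
        raise RuntimeError("429 Too Many Requests")
    if period == "5y":
        return [("2020-01-02", 100.0)]
    return []


rows, how = fetch_with_fallback(fake_fetch, sleep=waits.append)
print(how, calls)  # period=5y ['max', '10y', '5y']
```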

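📁 File naming and storage format

Each stock is saved as `<code>.<board>.csv`, for example:

- 005930.KS.csv → Samsung Electronics

- 035420.KS.csv → NAVER

Both examples are KOSPI stocks. A KOSDAQ stock is saved with the .KQ suffix instead.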
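Each yfinance result has to be reduced to the six CSV columns. The time zone needs care here. yfinance typically indexes KRX daily bars at midnight Asia/Seoul, so the time zone should be dropped without converting to UTC first. Converting to UTC first moves every bar to 15:00 of the previous day. The sketch below uses synthetic data that only imitates the shape of `Ticker.history()` output. `to_csv_schema` is a hypothetical name.

```python
import pandas as pd

REQ = ["date", "open", "high", "low", "close", "volume"]


def to_csv_schema(hist):
    """Flatten a history-like frame (DatetimeIndex named 'Date') into the CSV columns."""
    df = hist.reset_index().rename(columns={"Date": "date", "Open": "open", "High": "high",
                                            "Low": "low", "Close": "close", "Volume": "volume"})
    if isinstance(df["date"].dtype, pd.DatetimeTZDtype):
        # keep the Seoul wall-clock date, just drop the tz label
        df["date"] = df["date"].dt.tz_localize(None)
    return df[REQ]


idx = pd.DatetimeIndex(["2024-01-02", "2024-01-03"], tz="Asia/Seoul", name="Date")
hist = pd.DataFrame({"Open": [1.0, 2.0], "High": [1.0, 2.0], "Low": [1.0, 2.0],
                     "Close": [1.0, 2.0], "Volume": [10, 20]}, index=idx)

out = to_csv_schema(hist)
print(out["date"].dt.strftime("%Y-%m-%d").tolist())  # ['2024-01-02', '2024-01-03']

# The pitfall: converting to UTC before dropping the tz shifts the date
utc_dates = hist.index.tz_convert("UTC").tz_localize(None)
print(utc_dates[0])  # 2024-01-01 15:00:00
```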

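The folder layout is as follows. BASE_DIR is /content/drive/MyDrive/各國股票檔案 on Colab and ./data elsewhere.

- BASE_DIR/kr-share/dayK/: one CSV per stock

- BASE_DIR/kr-share/lists/: kr_list_all.csv, kr_prefilter_ok.csv, kr_symbol_blacklist.csv, kr_manifest.csv, kr_state.json

- BASE_DIR/Log/韓股日K資料下載器/: download_kr_YYYYMMDD_HHMMSS.txt run logs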
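The same layout can be created with pathlib instead of f-string paths. In the sketch below, `build_layout` is a hypothetical helper. The folder names are the script's defaults (MARKET_CODE, DATA_SUBDIR, PROJECT_NAME), and a temporary directory stands in for BASE_DIR.

```python
import tempfile
from pathlib import Path


def build_layout(base_dir, market="kr-share", data_subdir="dayK", project="韓股日K資料下載器"):
    """Create the data/lists/log folders under base_dir and return their paths."""
    base = Path(base_dir)
    layout = {
        "data": base / market / data_subdir,   # per-stock CSVs
        "lists": base / market / "lists",      # lists, manifest, state snapshot
        "logs": base / "Log" / project,        # run logs
    }
    for p in layout.values():
        p.mkdir(parents=True, exist_ok=True)
    return layout


with tempfile.TemporaryDirectory() as tmp:
    layout = build_layout(tmp)
    rel = {k: v.relative_to(tmp).as_posix() for k, v in layout.items()}
    exists = all(v.is_dir() for v in layout.values())

print(rel)  # {'data': 'kr-share/dayK', 'lists': 'kr-share/lists', 'logs': 'Log/韓股日K資料下載器'}
```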

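🧪 Sample validation and data-quality checks

When the run finishes, the script randomly samples 20 of the downloaded CSV files and checks that:

- all required columns are present (date, open, high, low, close, volume)

- the most recent bar is no more than 90 days old

- volume looks plausible (at least 5% of trading days have non-zero volume)

The result is logged as a one-line summary. The summary gives the total number of CSV files and how many sampled files passed (ok) or failed (bad).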
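The three checks can be written as one predicate per file. The sketch below mirrors `quick_validate_samples` with two changes. It takes DataFrames instead of reading CSVs, and it takes a fixed `now` instead of calling `pd.Timestamp.now()`, so it can be tested offline. `check_sample` and `summarize` are hypothetical names, and the frames are synthetic.

```python
import pandas as pd

REQUIRED = ["date", "open", "high", "low", "close", "volume"]


def check_sample(df, now, max_age_days=90, min_volume_ratio=0.05):
    """True if df has all columns, a recent last bar, and enough non-zero volume."""
    if not set(REQUIRED).issubset(df.columns):
        return False
    d = df.assign(date=pd.to_datetime(df["date"], errors="coerce")).dropna(subset=["date"])
    if d.empty:
        return False
    d = d.sort_values("date")
    if now - d["date"].iloc[-1] > pd.Timedelta(days=max_age_days):
        return False
    return bool((d["volume"] > 0).mean() >= min_volume_ratio)


def summarize(frames, now):
    ok = sum(check_sample(f, now) for f in frames)
    return {"sampled": len(frames), "ok": ok, "bad": len(frames) - ok}


now = pd.Timestamp("2025-10-01")
fresh = pd.DataFrame({"date": ["2025-09-29", "2025-09-30"], "open": [1, 1], "high": [1, 1],
                      "low": [1, 1], "close": [1, 1], "volume": [100, 0]})
stale = fresh.assign(date=["2024-01-02", "2024-01-03"])   # last bar far older than 90 days
missing = fresh.drop(columns=["volume"])                 # required column missing
print(summarize([fresh, stale, missing], now))  # {'sampled': 3, 'ok': 1, 'bad': 2}
```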

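💾 Run-parameter snapshot and log output

Every run writes:

- kr_state.json: the run parameters (timestamp, start/end date, threads, sample_limit)

- download_kr_YYYYMMDD_HHMMSS.txt: the run log, with download progress and error messages

- kr_manifest.csv: each stock's status (pending / done / failed / skipped)

These files support resuming, debugging, comparing runs, and tracking versions.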
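On a resumed run, only unfinished rows are queued again, and the parameter snapshot is a plain JSON dump. The sketch below uses hypothetical helper names (`select_todo`, `write_state`) and made-up example values. Its status rule, which retries pending and failed rows and leaves done and skipped alone, matches the script's `resume_download_loop`.

```python
import json
import os
import tempfile

import pandas as pd


def select_todo(manifest):
    """Rows to fetch on a resumed run: pending or failed."""
    return manifest[manifest["status"].isin(["pending", "failed"])]


def write_state(path, **params):
    """Save run parameters as pretty-printed UTF-8 JSON."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(params, f, ensure_ascii=False, indent=2)


mf = pd.DataFrame({
    "code": ["005930", "000660", "035420"],
    "board": ["KS", "KS", "KS"],
    "status": ["done", "failed", "pending"],
})
todo = select_todo(mf)
print(todo["code"].tolist())  # ['000660', '035420']

with tempfile.TemporaryDirectory() as tmp:
    state_path = os.path.join(tmp, "kr_state.json")
    write_state(state_path, ts="20251001_120000", start_date="2000-01-01",
                end_date="2099-12-31", threads=4, sample_limit=None)
    with open(state_path, encoding="utf-8") as f:
        state = json.load(f)
print(state["threads"], state["sample_limit"])  # 4 None
```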

```python
# -*- coding: utf-8 -*-
# 🚀 get_kr_stocks_yf_resume.py (Korea, 2025-10 resumable edition)
# Features:
# - Ported from the JP edition: checkpoint/resume support (list / prefilter / manifest)
# - Paths follow the kr-share/dayK and kr-share/lists layout
# - Prefilter: still the KR edition's quick_symbol_ok (writes an OK list and a blacklist)
# - Download: one request per ticker instead of batch downloads (conservative, to avoid
#   batch throttling); the per-ticker requests run concurrently on THREADS workers
# - Filename format: 000660.KS.csv (KOSPI) / <code>.KQ.csv (KOSDAQ)

import os, sys, time, random, logging, warnings, subprocess, json, glob
from pathlib import Path
from concurrent.futures import ThreadPoolExecutor, as_completed

import pandas as pd

# ====== Disclaimer ======
DISCLAIMER = """
[Disclaimer]
1) This program is for research and education only and is not investment advice; use it at your own risk.
2) Stock lists and prices come from third parties (KRX/pykrx, Yahoo Finance) and may be incomplete or wrong because of delays, delistings/suspensions, or API throttling.
3) Only KOSPI / KOSDAQ common stocks are attempted. If a board assignment or code changes, the official exchange data prevails.
4) Results are for reference only; never use them as the sole basis for an investment decision.
"""
print(DISCLAIMER.strip())

# ====== Install / import packages ======
def ensure_pkg(pkg: str) -> bool:
    """Import pkg, pip-installing it first if it is missing."""
    try:
        __import__(pkg)
        return True
    except ImportError:
        print(f"🔧 {pkg} is not installed; installing…")
        r = subprocess.run([sys.executable, "-m", "pip", "install", "-q", pkg],
                           capture_output=True, text=True)
        if r.returncode != 0:
            print(r.stderr)
            raise RuntimeError(f"Failed to install {pkg}")
        __import__(pkg)
        return True

ensure_pkg("tqdm")
ensure_pkg("yfinance")
ensure_pkg("pykrx")

from tqdm import tqdm
import yfinance as yf
from pykrx import stock as krx

# ====== Silence noisy loggers and warnings ======
for lg in ["yfinance", "urllib3", "requests"]:
    logging.getLogger(lg).setLevel(logging.CRITICAL)
    logging.getLogger(lg).propagate = False
warnings.filterwarnings("ignore")

# ========== Parameters and paths (kr-share layout) ==========
MARKET_CODE = "kr-share"           # market folder name
DATA_SUBDIR = "dayK"               # daily-bar subfolder name
PROJECT_NAME = "韓股日K資料下載器"    # project name (used as the log folder name)

# ====== Google Drive on Colab, local folder elsewhere ======
try:
    from google.colab import drive
    print("🔗 Mounting Google Drive...")
    try:
        drive.mount('/content/drive', force_remount=False)
        print("✅ Drive mounted")
    except Exception as e:
        # Already mounted, or the mount failed; continue and let later file I/O report problems
        print(f"⚠️ drive.mount failed: {e}")
    BASE_DIR = '/content/drive/MyDrive/各國股票檔案'  # change if you like
except ImportError:
    print("⚠️ Not running on Colab; using ./data")
    BASE_DIR = os.path.abspath("./data")

# Derived paths
BASE_MARKET_DIR = f"{BASE_DIR}/{MARKET_CODE}"
DATA_DIR = f"{BASE_MARKET_DIR}/{DATA_SUBDIR}"   # per-stock CSV files
LIST_DIR = f"{BASE_MARKET_DIR}/lists"           # lists and checkpoints
LOG_PARENT_DIR = f"{BASE_DIR}/Log"              # same log parent folder as the JP edition
LOG_DIR = f"{LOG_PARENT_DIR}/{PROJECT_NAME}"    # run logs

os.makedirs(DATA_DIR, exist_ok=True)
os.makedirs(LOG_DIR, exist_ok=True)
os.makedirs(LIST_DIR, exist_ok=True)

ts_tag = pd.Timestamp.now().strftime("%Y%m%d_%H%M%S")
LOG_FILE = f"{LOG_DIR}/download_kr_{ts_tag}.txt"

# ====== Tunable parameters ======
START_DATE = "2000-01-01"
END_DATE = "2099-12-31"   # far-future end date, same as the JP edition
THREADS = 4               # conservative worker count, to limit throttling
SAMPLE_LIMIT = None       # small number for testing; None = all stocks
PAUSE_SEC = 0.0           # reserved: per-ticker requests are conservative enough, no batch pause

# Checkpoint / resume flags. True rebuilds that stage; set all three to False to resume.
FORCE_REFRESH_LIST = True       # False: reuse the saved stock list
FORCE_REFILTER = True           # False: reuse the saved prefilter result
FORCE_REBUILD_MANIFEST = True   # False: reuse the manifest and continue where the last run stopped

# Checkpoint files
LIST_CSV = Path(LIST_DIR) / "kr_list_all.csv"
PREF_OK_CSV = Path(LIST_DIR) / "kr_prefilter_ok.csv"
BLACKLIST_CSV = Path(LIST_DIR) / "kr_symbol_blacklist.csv"
MANIFEST_CSV = Path(LIST_DIR) / "kr_manifest.csv"   # per-stock status (for resume)
STATE_JSON = Path(LIST_DIR) / "kr_state.json"       # run-parameter snapshot

print("\n📂 Directories:")
print(f"  BASE_DIR = {BASE_DIR}")
print(f"  LIST_DIR = {LIST_DIR}")
print(f"  {MARKET_CODE}/{DATA_SUBDIR} = {DATA_DIR}")
print(f"  logs = {LOG_DIR}")

# ====== Helpers (from the KR edition, partly modified) ======
def log(msg: str):
    """Append a timestamped line to LOG_FILE and echo it."""
    with open(LOG_FILE, "a", encoding="utf-8") as f:
        f.write(f"{pd.Timestamp.now():%Y-%m-%d %H:%M:%S}: {msg}\n")
    print(msg)

def map_symbol_kr(code: str, board: str = "KS") -> str:
    """KRX code + board -> Yahoo symbol, e.g. ('5930', 'KS') -> '005930.KS'."""
    code = str(code).zfill(6)
    suffix = ".KS" if str(board).upper() == "KS" else ".KQ"
    return f"{code}{suffix}"

# From the KR edition: standardize_df
def standardize_df(df: pd.DataFrame) -> pd.DataFrame:
    """Reduce a yfinance history frame to date/open/high/low/close/volume."""
    if df is None or df.empty:
        return pd.DataFrame()
    df = df.reset_index()
    if 'Date' not in df.columns:
        first_col = df.columns[0]
        if str(first_col).lower().startswith("date"):
            df = df.rename(columns={first_col: 'Date'})
        else:
            return pd.DataFrame()
    # yfinance indexes KRX daily bars at Seoul midnight (tz-aware). Drop the time zone but
    # keep the local date; converting to UTC first would move every bar to the previous day.
    dates = pd.to_datetime(df['Date'], errors='coerce')
    if isinstance(dates.dtype, pd.DatetimeTZDtype):
        dates = dates.dt.tz_localize(None)
    df['date'] = dates
    df = df.rename(columns={'Open': 'open', 'High': 'high', 'Low': 'low',
                            'Close': 'close', 'Volume': 'volume'})
    req = ['date', 'open', 'high', 'low', 'close', 'volume']
    if not all(c in df.columns for c in req):
        return pd.DataFrame()
    df = df.dropna(subset=['date']).copy()
    for c in ['open', 'high', 'low', 'close', 'volume']:
        df[c] = pd.to_numeric(df[c], errors='coerce')
    df = df.dropna(subset=['open', 'high', 'low', 'close', 'volume'])
    df = df[df['volume'] > 0]   # drop zero-volume rows
    df = df[(df['date'] >= pd.to_datetime(START_DATE)) & (df['date'] <= pd.to_datetime(END_DATE))]
    return df.sort_values('date').reset_index(drop=True)[req]

# From the KR edition: safe_history (period-first strategy)
def safe_history(symbol: str, start: str, end: str, interval="1d"):
    """Try period-based downloads (max -> 1y), then fall back to an explicit start/end range."""
    periods = ["max", "10y", "5y", "2y", "1y"]
    for i, p in enumerate(periods):
        try:
            df = yf.Ticker(symbol).history(period=p, interval=interval, auto_adjust=False)
            if df is not None and not df.empty:
                return df
            time.sleep(0.5 + 0.2 * i + random.uniform(0, 0.5))
        except Exception as e:
            if any(k in str(e).lower() for k in ["too many requests", "429", "rate"]):
                time.sleep(3.0 + i + random.uniform(0, 2.0))   # longer back-off when throttled
            else:
                time.sleep(0.5 + 0.2 * i + random.uniform(0, 0.5))
    try:
        df = yf.Ticker(symbol).history(start=start, end=end, interval=interval, auto_adjust=False)
        if df is not None and not df.empty:
            return df
    except Exception as e:
        log(f"[safe_history] {symbol} start/end error: {e}")
    return None

# From the KR edition: quick_symbol_ok
def quick_symbol_ok(symbol: str) -> bool:
    """Lightweight check: last 5 daily bars -> 1y of monthly bars -> 5y of quarterly bars."""
    try:
        tk = yf.Ticker(symbol)
        try:
            df = tk.history(period="5d", interval="1d", auto_adjust=False)
            if df is not None and not df.empty:
                return True
        except Exception:
            pass
        for per, itv in [("1y", "1mo"), ("5y", "3mo")]:
            try:
                df2 = tk.history(period=per, interval=itv, auto_adjust=False)
                if df2 is not None and not df2.empty:
                    return True
            except Exception:
                pass
        return False
    except Exception:
        return False

# From the KR edition: is_valid_csv (using the new DATA_DIR layout)
def is_valid_csv(code, board) -> bool:
    """Check that an existing CSV has the required columns and starts near START_DATE."""
    file_path = f"{DATA_DIR}/{code}.{board}.csv"
    try:
        df = pd.read_csv(file_path)
        req = ['date', 'open', 'high', 'low', 'close', 'volume']
        if not all(c in df.columns for c in req):
            return False
        df['date'] = pd.to_datetime(df['date'], errors='coerce')
        df = df.dropna(subset=['date'])
        if df.empty:
            return False
        # Heuristic: a first bar more than a year after START_DATE counts as incomplete
        # (this also makes stocks listed after that date re-download on every run)
        if df['date'].min() > pd.to_datetime(START_DATE) + pd.Timedelta(days=365):
            return False
        return True
    except Exception:
        return False

# ====== Korean stock list via pykrx (from the KR edition, partly modified) ======
def get_kr_list_fresh():
    """
    Return [(code, name, board), ...]
    board: KS = KOSPI, KQ = KOSDAQ
    """
    today = pd.Timestamp.today().strftime("%Y%m%d")
    lst = []
    for mk, bd in [("KOSPI", "KS"), ("KOSDAQ", "KQ")]:
        try:
            tickers = krx.get_market_ticker_list(today, market=mk)
            for t in tickers:
                name = krx.get_market_ticker_name(t)
                code = str(t).zfill(6)
                lst.append((code, name, bd))
        except Exception as e:
            log(f"❌ pykrx failed to fetch the {mk} list: {e}")
    if not lst:
        # Minimal built-in fallback list
        log("⚠️ Stock list is empty; using the minimal fallback list")
        return [("005930", "Samsung Electronics", "KS"), ("035420", "NAVER", "KS")]
    return lst

def get_kr_list():
    """Reuse LIST_CSV when allowed; otherwise fetch a fresh list and save it."""
    if (not FORCE_REFRESH_LIST) and LIST_CSV.exists():
        try:
            # dtype=str keeps leading zeros (otherwise 005930 is read back as 5930)
            df = pd.read_csv(LIST_CSV, dtype={"code": str})
            rows = list(zip(df["code"].str.zfill(6), df["name"].astype(str), df["board"].astype(str)))
            print(f"📄 Using existing list: {LIST_CSV} ({len(rows)} stocks)")
            return rows
        except Exception:
            print("⚠️ Could not read the old list; refreshing")
    rows = get_kr_list_fresh()
    pd.DataFrame(rows, columns=["code", "name", "board"]).to_csv(LIST_CSV, index=False)
    print(f"💾 List saved: {LIST_CSV} ({len(rows)} stocks)")
    return rows

# ====== Prefilter (quick check + blacklist), run on a thread pool ======
def prefilter_symbols_kr(shares, max_workers=6):
    """Quick-check every symbol concurrently; return passing rows and save the rest as a blacklist."""
    valid, invalid = [], []
    with ThreadPoolExecutor(max_workers=max_workers) as ex:
        futs = {ex.submit(quick_symbol_ok, map_symbol_kr(code, board)): (code, name, board)
                for code, name, board in shares}
        for f in tqdm(as_completed(futs), total=len(futs), desc="KR prefilter", unit="stock"):
            item = futs[f]
            try:
                ok = f.result()
            except Exception:
                ok = False
            (valid if ok else invalid).append(item)
    # Always rewrite the blacklist so a stale one from an earlier run is not left behind
    pd.DataFrame(invalid, columns=["code", "name", "board"]).to_csv(BLACKLIST_CSV, index=False)
    log(f"✅ KR quick check: {len(valid)} passed, {len(invalid)} dropped "
        f"({len(invalid) / max(1, len(shares)):.1%})")
    return valid

def get_prefilter_ok(rows_all):
    """Reuse PREF_OK_CSV when allowed; otherwise redo the prefilter."""
    if (not FORCE_REFILTER) and PREF_OK_CSV.exists():
        try:
            df = pd.read_csv(PREF_OK_CSV, dtype={"code": str})
            rows = list(zip(df["code"].str.zfill(6), df["name"].astype(str), df["board"].astype(str)))
            print(f"📄 Using existing prefilter result: {PREF_OK_CSV} ({len(rows)} stocks)")
            return rows
        except Exception:
            print("⚠️ Could not read the old prefilter result; redoing it")
    ok_rows = prefilter_symbols_kr(rows_all, max_workers=THREADS)
    pd.DataFrame(ok_rows, columns=["code", "name", "board"]).to_csv(PREF_OK_CSV, index=False)
    print(f"💾 Prefilter result saved: {PREF_OK_CSV} ({len(ok_rows)} stocks)")
    return ok_rows

# ====== Manifest: per-stock status file for resume (from the JP edition, columns adapted) ======
def save_manifest(mf):
    mf.to_csv(MANIFEST_CSV, index=False)

def build_manifest(ok_rows):
    """Create or load the manifest. Columns: code, name, board, status, last_error, last_try."""
    if (not FORCE_REBUILD_MANIFEST) and MANIFEST_CSV.exists():
        # Read code and the text columns as str (leading zeros, no float NaN columns)
        mf = pd.read_csv(MANIFEST_CSV, dtype={"code": str, "last_error": str, "last_try": str})
        need_cols = {"code", "name", "board", "status", "last_error", "last_try"}
        if need_cols.issubset(mf.columns):
            mf["code"] = mf["code"].str.zfill(6)
            mf[["last_error", "last_try"]] = mf[["last_error", "last_try"]].fillna("")
            print(f"📄 Loaded existing manifest: {MANIFEST_CSV} ({len(mf)} rows)")
            # Append any codes that are new since the manifest was written
            existing_codes = set(mf["code"])
            new_rows = [r for r in ok_rows if r[0] not in existing_codes]
            if new_rows:
                new_mf = pd.DataFrame(new_rows, columns=["code", "name", "board"])
                new_mf["status"] = "pending"
                new_mf["last_error"] = ""
                new_mf["last_try"] = ""
                mf = pd.concat([mf, new_mf], ignore_index=True)
                print(f"➕ Found {len(new_rows)} new codes; added them to the manifest")
            return mf
        print("⚠️ Old manifest is missing columns; rebuilding")

    # Build a new manifest
    mf = pd.DataFrame(ok_rows, columns=["code", "name", "board"])
    mf["status"] = "pending"   # pending / done / failed / skipped
    mf["last_error"] = ""
    mf["last_try"] = ""

    # Mark stocks whose CSV already exists as done
    have_codes_boards = set()
    for f in os.listdir(DATA_DIR):
        if f.endswith(".csv"):
            parts = f.split(".")
            if len(parts) == 3 and parts[1] in ["KS", "KQ"]:
                have_codes_boards.add((parts[0], parts[1]))

    def is_done(row):
        return (row["code"], row["board"]) in have_codes_boards

    mf.loc[mf.apply(is_done, axis=1), ["status", "last_error", "last_try"]] = ["done", "", "auto-detected"]
    save_manifest(mf)
    print(f"💾 New manifest: {MANIFEST_CSV} ({len(mf)} rows, {(mf['status'] == 'done').sum()} already done)")
    return mf

# ====== Per-ticker download (replaces the batch-download logic) ======
def fetch_kr_stock_resume(row, mf_idx):
    """Download one stock unless a valid CSV already exists; return its new manifest status."""
    code, name, board = row['code'], row['name'], row['board']
    symbol = map_symbol_kr(code, board)
    out_csv = f"{DATA_DIR}/{code}.{board}.csv"

    # resume_download_loop only passes pending/failed rows; a valid existing file is promoted to done
    if os.path.exists(out_csv) and is_valid_csv(code, board):
        return {'mf_idx': mf_idx, 'code': code, 'status': 'done', 'last_error': 'auto-corrected'}

    df = safe_history(symbol, START_DATE, END_DATE, "1d")
    if df is None:
        return {'mf_idx': mf_idx, 'code': code, 'status': 'failed', 'last_error': 'history_none'}
    df = standardize_df(df)
    if df.empty:
        return {'mf_idx': mf_idx, 'code': code, 'status': 'failed', 'last_error': 'empty_df'}
    try:
        df.to_csv(out_csv, index=False)
        return {'mf_idx': mf_idx, 'code': code, 'status': 'done', 'last_error': ''}
    except Exception as e:
        return {'mf_idx': mf_idx, 'code': code, 'status': 'failed', 'last_error': f'save_error: {e}'}

def resume_download_loop(mf):
    """Fetch every pending/failed stock concurrently and record results in the manifest."""
    need_to_fetch = mf[mf["status"].isin(["pending", "failed"])].copy()
    if need_to_fetch.empty:
        log("✅ Nothing to download: the manifest is already complete.")
        return
    log(f"📝 Resuming: {len(need_to_fetch)} stocks to download or retry.")

    results = []
    with ThreadPoolExecutor(max_workers=THREADS) as ex:
        futs = {ex.submit(fetch_kr_stock_resume, row, idx): (idx, row)
                for idx, row in need_to_fetch.iterrows()}
        for f in tqdm(as_completed(futs), total=len(futs), desc="KR download", unit="stock"):
            idx, row = futs[f]
            try:
                res = f.result()
            except Exception as e:
                # An unexpected error in one worker should not abort the whole run
                res = {'mf_idx': idx, 'code': row['code'], 'status': 'failed',
                       'last_error': f'unexpected: {e}'}
            # Update the manifest (only this main thread touches mf)
            mf.loc[idx, "status"] = res['status']
            mf.loc[idx, "last_error"] = str(res['last_error'])
            mf.loc[idx, "last_try"] = pd.Timestamp.now().strftime("%Y-%m-%d %H:%M:%S")
            results.append(res)
            # Flush the manifest to disk every 50 completed stocks
            if len(results) % 50 == 0:
                save_manifest(mf)
    # Final save
    save_manifest(mf)

# ====== Quick sample validation (from the JP edition, paths adapted) ======
def quick_validate_samples(out_dir, sample_k=20):
    """Randomly sample CSVs in out_dir and check columns, recency (90 days) and volume."""
    files = glob.glob(os.path.join(out_dir, "*.csv"))
    if not files:
        return {"files": 0, "ok": 0, "bad": 0, "notes": "no files"}
    pick = random.sample(files, min(sample_k, len(files)))
    ok, bad = 0, 0
    for p in pick:
        try:
            d = pd.read_csv(p)
            if not {"date", "open", "high", "low", "close", "volume"}.issubset(d.columns):
                bad += 1
                continue
            d["date"] = pd.to_datetime(d["date"], errors="coerce")
            d = d.dropna(subset=["date"]).sort_values("date")
            if d.empty:
                bad += 1
                continue
            # Latest bar more than 90 days old -> stale
            if (pd.Timestamp.now() - d["date"].iloc[-1]) > pd.Timedelta(days=90):
                bad += 1
                continue
            # At least 5% of trading days must have non-zero volume
            if (d["volume"] > 0).mean() < 0.05:
                bad += 1
                continue
            ok += 1
        except Exception:
            bad += 1
    return {"files": len(files), "ok": ok, "bad": bad, "notes": f"sample={len(pick)}"}

# ====== Main flow (with checkpoints) ======
def main():
    print("\n🚀 KRX stock download started (with resume support)")
    # 1) Stock list (reused or refreshed)
    rows_all = get_kr_list()
    if SAMPLE_LIMIT:
        rows_all = rows_all[:SAMPLE_LIMIT]
    log(f"🧾 Codes loaded: {len(rows_all)}")

    # 2) Prefilter (reused or redone)
    ok_rows = get_prefilter_ok(rows_all)
    if not ok_rows:
        log("🚫 Nothing left to download after the prefilter.")
        return

    # 3) Build or load the manifest (pending/done/failed/skipped)
    mf = build_manifest(ok_rows)

    # 4) Resume: fetch only unfinished stocks
    resume_download_loop(mf)

    # 5) Status summary
    mf = pd.read_csv(MANIFEST_CSV)
    tot = len(mf)
    done = int((mf["status"] == "done").sum())
    failed = int((mf["status"] == "failed").sum())
    pending = int((mf["status"] == "pending").sum())
    skipped = int((mf["status"] == "skipped").sum())
    log(f"📊 Status: total={tot}, done={done}, failed={failed}, pending={pending}, skipped={skipped}")

    have = len([f for f in os.listdir(DATA_DIR) if f.endswith(".csv")])
    log(f"🎉 Finished\n  ✅ Data folder: {DATA_DIR}\n  📝 Log: {LOG_FILE}\n  📦 CSV files: {have}")

    # 6) Sample validation
    chk = quick_validate_samples(DATA_DIR, sample_k=20)
    log(f"🔎 Sample check: files={chk['files']}, ok={chk['ok']}, bad={chk['bad']} ({chk['notes']})")

    # 7) Save the run parameters for later comparison
    with open(STATE_JSON, "w", encoding="utf-8") as f:
        json.dump({
            "ts": ts_tag,
            "start_date": START_DATE,
            "end_date": END_DATE,
            "threads": THREADS,
            "sample_limit": SAMPLE_LIMIT,
        }, f, ensure_ascii=False, indent=2)
    print(f"💾 Parameter snapshot: {STATE_JSON}")

if __name__ == "__main__":
    main()
```

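🏁 Wrap-up

This Korea module keeps the core principles of being stable, resumable, and verifiable. For KRX, it adds pykrx-based list retrieval and a conservative download strategy. It is suitable for building a historical Korean stock database, or as a learning example for financial data processing.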

-----------------------------------------------------------------------------------------------------

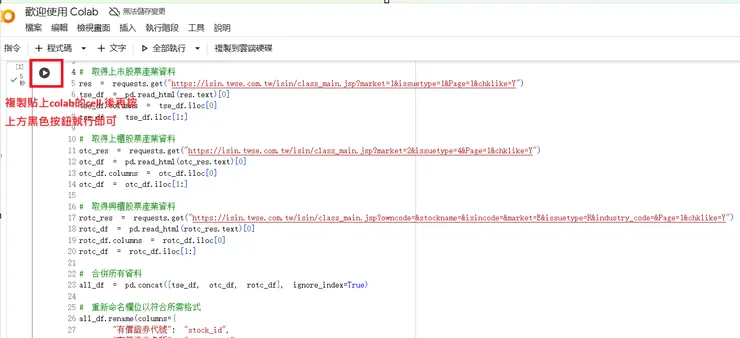

Paste the code above into a Colab cell and run it. By default, it creates folders in Google Drive to store the daily-bar files.

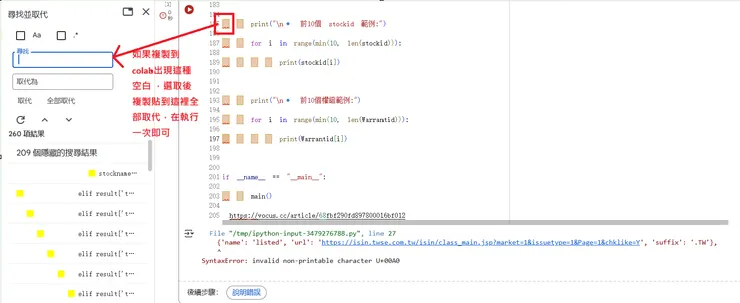

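When the code is copied into Colab, blank-looking whitespace like that in the screenshot may appear and make the cell fail with:

`File "<tokenize>", line 205 IndentationError: unindent does not match any outer indentation level`

To fix it, select that whitespace and use find-and-replace to replace every occurrence with a normal space. Then run the cell again.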
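If you prefer to clean the pasted text programmatically, a sketch like the following replaces common look-alike space characters with ordinary spaces. It assumes the stray whitespace is a non-breaking space (U+00A0) or a similar Unicode space character, which is a frequent side effect of copying code from web pages. The function name `clean_pasted_code` is hypothetical.

```python
# Unicode characters that look like (or hide between) spaces but are not valid indentation.
# Assumption: the stray whitespace from the web page is one of these.
ODD_SPACES = {
    "\u00a0": " ",   # no-break space
    "\u2007": " ",   # figure space
    "\u202f": " ",   # narrow no-break space
    "\u3000": " ",   # ideographic (full-width) space
    "\u200b": "",    # zero-width space (removed)
}


def clean_pasted_code(text: str) -> str:
    """Replace look-alike space characters with ordinary spaces."""
    return text.translate(str.maketrans(ODD_SPACES))


broken = "def f():\n\u00a0\u00a0\u00a0\u00a0return 1\n"
try:
    compile(broken, "<cell>", "exec")
    broken_compiles = True
except SyntaxError:  # IndentationError is a subclass of SyntaxError
    broken_compiles = False

fixed = clean_pasted_code(broken)
namespace = {}
exec(fixed, namespace)
print(broken_compiles, namespace["f"]())  # False 1
```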

-----------------------------------------------------------------------------------------------------

🧑‍🔬 AUTHOR'S STATUS AND INTENT: The author of this report is an independent, amateur data researcher and NOT a professional quantitative analyst or a licensed financial advisor. This work was completed in the author's personal free time, outside a full-time job, for statistical research purposes.

📊 DATA SOURCE LIMITATION: All historical price data is sourced from free public providers (e.g., Yahoo Finance). While the author uses the V4.0 QA System to check for and remove obvious errors, the author offers NO WARRANTY of 100% accuracy. Data integrity is constrained by the free source.

🚫 NO INVESTMENT ADVICE: This content, including its charts and AI analysis results, is for statistical research and educational inspiration only. It does NOT constitute personalized financial advice, investment recommendations, or a solicitation to buy or sell securities. All analysis describes historical statistical patterns only.

⚠️ RISK & LIABILITY: Stock market investing involves significant risk. The reader must exercise their own judgment and bears all investment risk. The author (and the platform) assumes NO LIABILITY for any investment decisions or financial losses based on the information provided herein.