Web Scraping: A Key Tool in Data Science

Core Insights

Here are the three main takeaways from this lesson:

- Web Scraping is the Bridge from Unstructured to Structured Data: It is a vital technique in data science used to automatically extract large amounts of messy data from websites and convert it into an organized format for analysis, machine learning, and real-time applications.

- Python Provides Powerful, Specialized Tools: Python is highly effective for this task thanks to libraries tailored for different scraping needs: BeautifulSoup for parsing HTML/XML, Scrapy for extensive web crawling, and Selenium for browser automation.

- Great Utility Requires Ethical Responsibility: While web scraping powers everyday applications like price comparisons and social media trend tracking, it must be performed responsibly by respecting a website's terms of use and the rules in its robots.txt file.

Jargon Explained in Plain Language

- Web Scraping / Web Harvesting: Imagine hiring a super-fast robot to read thousands of web pages and copy only the specific text you want into a spreadsheet. That automated process is web scraping.

- Unstructured Data: Information that is free-flowing and messy, like the text, images, and layout code of a normal webpage. It doesn't fit neatly into rows and columns.

- Structured Form: Information that is neatly organized—like a well-labeled Excel spreadsheet or a database. Computers can easily analyze this kind of data.

- Parse Tree: A behind-the-scenes map created by a program (like BeautifulSoup) that breaks down a webpage's messy code into a simple, hierarchical "family tree," making it much easier to locate specific words or data.

- Web Crawling Framework: A ready-made toolkit (like Scrapy) for programmers. It helps build automated "spiders" that can jump from link to link across a website, gathering data continuously.

- Robots.txt: A digital "rulebook" or "Do Not Enter" sign posted by a website owner. It tells automated robots and web scrapers which parts of the website they are allowed to look at and which parts they must stay away from.

Structured Summary

Introduction to Web Scraping

Web scraping, also known as web data extraction, is the process of taking vast amounts of unstructured data from websites and converting it into a structured form that can be easily analyzed.
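
As a rough illustration of that unstructured-to-structured conversion, the sketch below turns a small HTML fragment into rows and then into CSV text. It uses Python's standard-library html.parser rather than any of the scraping libraries named in this lesson, so it runs without extra installs. The page fragment, the class names "product", "name", and "price", and the helper names are all hypothetical.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical page fragment; the class names are invented for this sketch.
PAGE = """
<div class="product"><span class="name">Kettle</span><span class="price">24.99</span></div>
<div class="product"><span class="name">Toaster</span><span class="price">31.50</span></div>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.rows = []       # structured output: one dict per product
        self._field = None   # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "div" and cls == "product":
            self.rows.append({})          # a new product starts here
        elif tag == "span" and cls in ("name", "price"):
            self._field = cls

    def handle_data(self, data):
        if self._field and self.rows:
            self.rows[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def scrape_products(html):
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.rows


def to_csv(rows):
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = scrape_products(PAGE)
print(to_csv(rows))
```

The messy markup goes in, and what comes out is a list of rows that a spreadsheet or analysis tool can read directly.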

The Role of Web Scraping in Data Science

Web scraping is an integral part of the data science workflow. Its primary purposes include:

- Data Collection: Serving as the main method to gather internet data for research and deep analysis.

- Machine Learning: Supplying the massive datasets required to train machine learning models.

- Real-time Applications: Providing live information for services that require constant updates, such as weather forecasts.

Key Python Libraries

Python makes web scraping efficient through several specialized libraries:

- BeautifulSoup: Pulls data out of HTML and XML files by creating an easily readable "parse tree" from the webpage's source code.

- Scrapy: An open-source, collaboratively maintained framework for web crawling and large-scale data extraction.

- Selenium: A tool that actually controls and automates web browsers through programming, mimicking how a real human interacts with a webpage.
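
To make the idea of crawling more concrete, here is a minimal sketch of link-to-link traversal, the kind of work a framework such as Scrapy automates. It is not Scrapy code: it uses only the standard library, and the three-page "site" is a hypothetical dictionary held in memory so that nothing is actually fetched.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical three-page site held in memory; paths and links are invented.
SITE = {
    "/": '<a href="/teams">Teams</a> <a href="/players">Players</a>',
    "/teams": '<a href="/">Home</a> <a href="/players">Players</a>',
    "/players": '<a href="/">Home</a>',
}


class LinkParser(HTMLParser):
    """Records the href of every <a> tag it sees."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def extract_links(html):
    parser = LinkParser()
    parser.feed(html)
    parser.close()
    return parser.links


def crawl(start, pages):
    """Breadth-first crawl that visits each reachable page exactly once."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)
        for link in extract_links(pages.get(url, "")):
            if link not in seen:      # skip pages already queued or visited
                seen.add(link)
                queue.append(link)
    return order


visited = crawl("/", SITE)
print(visited)
```

A real crawler would add downloading, politeness delays, and error handling, which is much of what a crawling framework provides.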

Practical Applications

The ability to turn the web into a workable dataset has many real-world applications:

- Price Comparison: Gathering product data from multiple online stores to compare prices (e.g., using services like ParseHub).

- Social Media Scraping: Collecting data from platforms like Twitter to identify and analyze trending topics.

- Email Gathering: Collecting contact information for marketing and bulk email purposes.

Conclusion and Ethical Considerations

Web scraping is an essential skill in data science that unlocks the internet as a massive data source. However, practitioners must act ethically by respecting the target website's terms of service and strictly following the allowances outlined in the site's robots.txt file.
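
One way to check robots.txt rules before scraping is Python's standard-library urllib.robotparser. The sketch below parses a hypothetical robots.txt from a string instead of downloading one; the user-agent name "my-scraper" and the example.com URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real file is served at <site>/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_rules(robots_text):
    """Parse robots.txt text into a rule object without any network access."""
    rules = RobotFileParser()
    rules.parse(robots_text.splitlines())
    return rules


rules = build_rules(ROBOTS_TXT)
allowed = rules.can_fetch("my-scraper", "https://example.com/products")
blocked = rules.can_fetch("my-scraper", "https://example.com/private/data")
print(allowed, blocked)
```

Passing this check does not replace reading the site's terms of service, since robots.txt covers only crawler access rules.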

HTML for Web Scraping

This lesson gives an introductory overview of Hypertext Markup Language (HTML) with web scraping in mind. Web scraping is the process of extracting useful data from websites using a programming language such as Python.

Core Points of the Lesson

- HTML is the Foundation of Web Data: Websites are built using HTML tags that tell a browser how to display content, and understanding these tags is essential for extracting data.

- The Power of Python: With a basic understanding of HTML structure, a Python program can automatically pull specific information, such as player salaries or real estate prices, from a page.

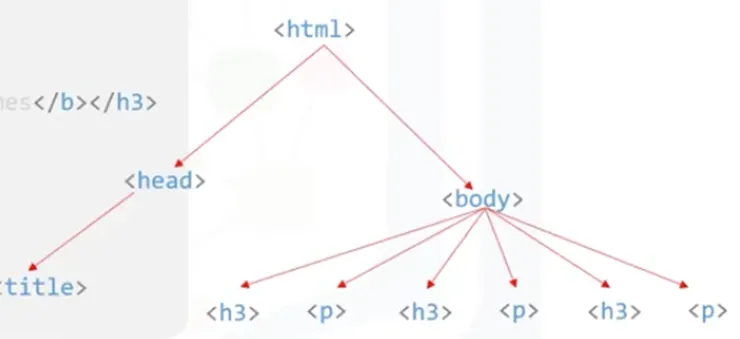

- Document Hierarchy: HTML is organized in a "tree" structure where tags are nested within each other, creating parent, child, and sibling relationships.

- Targeting Specific Tags: Data is usually stored within specific elements like headings (<h3>), paragraphs (<p>), or table cells (<td>), which scrapers use as "addresses" to find information.

- Browser Inspection: Modern browsers allow users to right-click any part of a webpage and "Inspect" the underlying HTML code to see how the data is structured.
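
The idea of using tags as "addresses" can be sketched with the standard library's html.parser, shown here instead of a dedicated scraping library. The snippet, the player name, and the salary are invented, and the collector assumes every tag is explicitly closed, which real pages do not always guarantee.

```python
from html.parser import HTMLParser

# Hypothetical snippet; the player and salary are invented for illustration.
HTML = """
<html><body>
<h3>Alex Example</h3>
<p>Salary: $1,000,000</p>
</body></html>
"""


class TagTextCollector(HTMLParser):
    """Groups text by the tag that directly encloses it."""

    def __init__(self, targets):
        super().__init__()
        self.targets = set(targets)
        self.found = {tag: [] for tag in targets}
        self._open = []   # stack of currently open tags

    def handle_starttag(self, tag, attrs):
        self._open.append(tag)

    def handle_endtag(self, tag):
        # Simplification: assumes tags close in the order they were opened.
        if self._open and self._open[-1] == tag:
            self._open.pop()

    def handle_data(self, data):
        text = data.strip()
        if text and self._open and self._open[-1] in self.targets:
            self.found[self._open[-1]].append(text)


collector = TagTextCollector(["h3", "p"])
collector.feed(HTML)
collector.close()
print(collector.found)
```

In practice, the tag names to target come from inspecting the page in the browser first.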

Terminology Explanation

- Web Scraping: The process of using a computer program to automatically "read" a website and grab specific pieces of information from it.

- Tags: These are the "labels" of the web. They are pieces of text surrounded by angle brackets (like <html>) that tell the browser what a piece of content is, such as a heading or a link.

- Element: An element is a complete "package" consisting of a start tag, the actual content (like a person's name), and an end tag.

- Attribute: This is extra information placed inside a start tag. For example, in a link, the href attribute tells the browser exactly which web address to go to.

- Root Element: Think of this as the "trunk" of the tree. In HTML, the <html> tag is the root because every other part of the page lives inside it.

Structured Summary

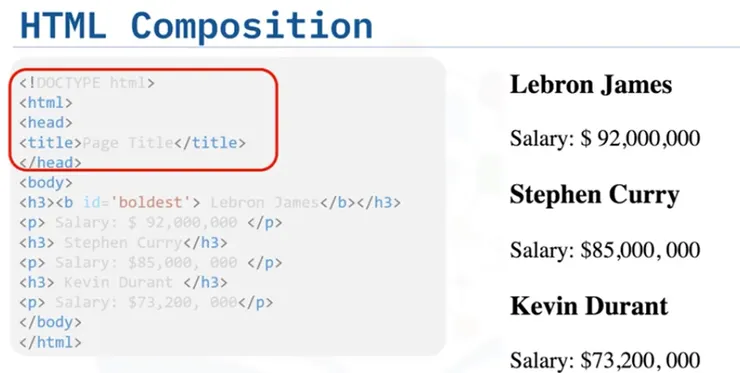

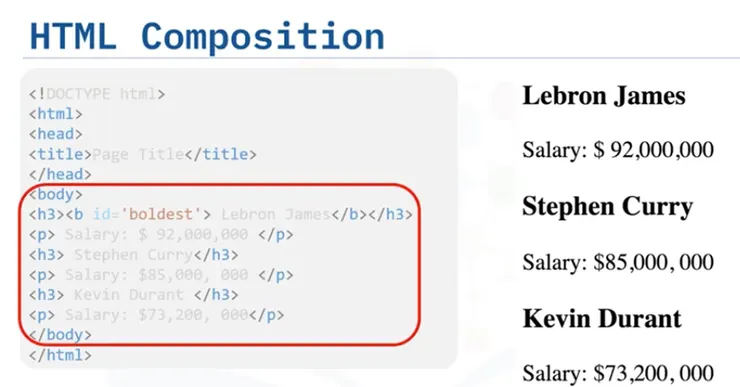

1. Basic HTML Composition

A standard HTML document is divided into two main sections:

- The Head (<head>): Contains meta-information about the page that isn't usually visible to the user.

- The Body (<body>): This is where the actual visible content of the page lives, such as text, images, and the data typically targeted for scraping.

2. The Anatomy of an HTML Tag

Using the anchor element (<a>), which creates hyperlinks, as an example, an element consists of several parts:

- Start and End Tags: The start tag (e.g., <a>) marks the beginning, and the end tag (e.g., </a>) marks the finish.

- Content: The text that appears on the screen for the user to see.

- Attributes: Name-value pairs inside the start tag; for example, href defines the destination URL of a link.
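
The parts listed above can be pulled out of a single anchor element with a short standard-library sketch. The link text and URL are hypothetical, and AnchorParser is a name made up for this example.

```python
from html.parser import HTMLParser

# Hypothetical link used only for illustration.
LINK = '<a href="https://example.com/stats">Player stats</a>'


class AnchorParser(HTMLParser):
    """Splits one <a> element into start tag, attributes, content, end tag."""

    def __init__(self):
        super().__init__()
        self.parts = {}

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self.parts["start_tag"] = self.get_starttag_text()
            self.parts["attributes"] = dict(attrs)

    def handle_data(self, data):
        # Only keep text that sits between the start tag and the end tag.
        if "start_tag" in self.parts and "end_tag" not in self.parts:
            self.parts["content"] = data

    def handle_endtag(self, tag):
        if tag == "a":
            self.parts["end_tag"] = f"</{tag}>"


parser = AnchorParser()
parser.feed(LINK)
parser.close()
print(parser.parts)
```

For scraping, the attributes dictionary is often the most useful piece, because the href value is the address to follow or store.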

3. Understanding the HTML Tree

HTML documents are structured like a family tree:

- Parents and Children: If a tag is inside another tag, the outer one is the "parent" and the inner one is the "child".

- Siblings: Tags that are at the same level of nesting (like a list of player names) are called siblings.

- Descendants: Any tag nested anywhere inside a parent, even deep down, is considered a descendant.

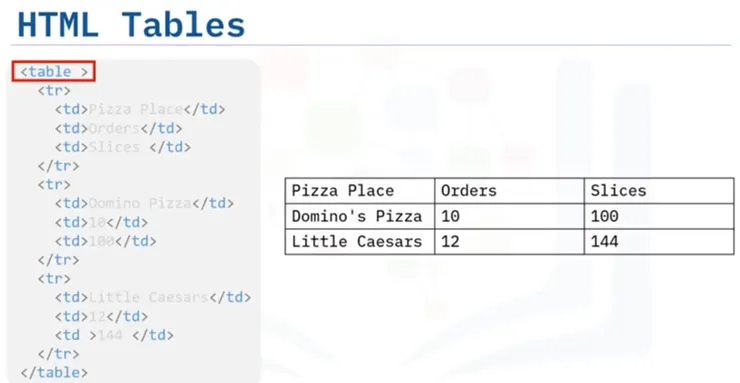
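
The family-tree relationships can be explored in code. The sketch below uses xml.etree.ElementTree from the standard library, which only accepts well-formed markup, so it assumes every tag in the hypothetical snippet is properly closed. Real-world HTML is often messier and usually needs a more forgiving parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed snippet; the roster entries are invented.
DOC = """
<html>
  <body>
    <h3>Roster</h3>
    <ul>
      <li>Player A</li>
      <li>Player B</li>
    </ul>
  </body>
</html>
"""

root = ET.fromstring(DOC.strip())

# ElementTree stores children but not parents, so build a child -> parent map.
parent_of = {child: parent for parent in root.iter() for child in parent}

body = root.find("body")
ul = body.find("ul")
first_li = ul.find("li")

# Children: tags nested directly inside <body>.
children_of_body = [child.tag for child in body]

# Siblings: other elements that share the same parent as the first <li>.
siblings_of_first_li = [li.text for li in parent_of[first_li] if li is not first_li]

# Descendants: everything nested inside <body>, at any depth.
descendants_of_body = [el.tag for el in body.iter() if el is not body]

print(root.tag, parent_of[first_li].tag)
print(children_of_body, siblings_of_first_li, descendants_of_body)
```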

4. HTML Tables

Tables are a common way to store structured data on the web:

- <table>: Defines the table as a whole; all rows and cells sit between <table> and </table>.

- <tr> (Table Row): Used to create a new horizontal row of data.

- <td> (Table Data): Defines an individual cell within a row where the actual data (like a number or name) is stored.
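
Because these three tags map naturally onto rows and columns, a table can be read into a list of lists with a small parser. This is a minimal sketch using the standard library's html.parser. The table contents are invented, and it ignores nested tables and header cells.

```python
from html.parser import HTMLParser

# Hypothetical table; the names and numbers are invented for illustration.
TABLE = """
<table>
  <tr><td>Player A</td><td>1000000</td></tr>
  <tr><td>Player B</td><td>750000</td></tr>
</table>
"""


class TableParser(HTMLParser):
    """Builds one list per <tr>, with one string per <td>."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])            # start a new row
        elif tag == "td" and self.rows:
            self.rows[-1].append("")        # start a new, empty cell
            self._in_cell = True

    def handle_data(self, data):
        if self._in_cell:
            self.rows[-1][-1] += data       # text may arrive in pieces

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False


parser = TableParser()
parser.feed(TABLE)
parser.close()
rows = [[cell.strip() for cell in row] for row in parser.rows]
print(rows)
```

Each inner list corresponds to one row, so the result can be passed to a CSV writer or a data-analysis library as-is.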